Hi,

My question certainly stems from the imposter syndrome that I am experiencing right now for no good reason, but when looking to resolve embedded C problems, I come across a lot of posts from people who have a deep understanding of the language and of how an MCU works at the machine code level.

When I read these posts, I do understand what the author is saying, but it really makes me feel like I should know more about what’s happening under the hood.

So my question is this: how do you rate yourself in your most used language? Do you understand the subtleties and nuances of your language?

I know this doesn’t necessarily make me a bad firmware dev, but damn does it make me feel like one when I read these posts.

I get that this is a subjective question without any good responses, but I’d be interested in hearing about different experiences in the hope of reducing my imposter syndrome.

Thanks

With about 12 years in my primary language I’d say my expertise is expressed in knowing exactly what to Google…

This is probably the true highest level of expertise you’ll get out of most professional coders.

It takes a real monk-level devotion to understanding the language to go from being proficient at looking shit up to being the person who writes the shit people are looking up.

I’ve learned a lot by breaking things. By making mistakes and watching other people make mistakes. I’ve written some blog posts that make me look real smart.

But mostly just bang code together until it works. Run tests and perf stuff until it looks good. It’s time. I have the time to write it up. And check back on what was really happening.

But I still mostly learn by suffering.

> But I still mostly learn by suffering.

That resonates so much. Almost every time someone is deeply impressed with something I know, it brings back a painful memory of how I learned it.

I really like brain twisters. It can get frustrating at times, but it’s the most fun out of the profession to me.

Knowing the footguns in your language is always useful. The more you know, the less you’ll shoot your foot.

I think that one of my issues is that I’d like to be more knowledgeable about the smaller bits and bytes of C, but I don’t have the time at work to go deeper and I don’t have any free time because I have young kids.

> I don’t have any free time because I have young kids.

That’s a healthy thing to acknowledge.

It’s a brutal phase for professional development, hobbies, free time, sex, basic housekeeping…

It gets better as the little ones grow.

At least we know emotionally that it will get better with the second one haha, even if the day to day is rough.

With the first one, it felt like we would never get to the other side of it. But we did and we will for the second one.

I am eager to learn new things, so having so little free time is definitely tough. And the lack of sleep/energy makes it even harder.

Thanks for the encouragement, it’s nice to be acknowledged by someone else that went through the same thing. We often forget that we are not alone and a lot of people got through it before us.

I don’t know about your workplace, but if at all possible I would try to find time between tasks to spend on learning. If your company doesn’t have a policy where it is clear that employees have the freedom to learn during company time, try to underestimate your own velocity even more and use the time it leaves for learning.

About 10 years ago I worked for a company where I was performing quite well. Since that meant I finished my tasks early, I could have taken on even more tasks. But I didn’t really tell our scrum master when I finished early. Instead I spent the time learning, and also refactoring code to help me become more productive. This added up, and my efficiency only increased more, until at some point I only needed one or two days to complete a week’s sprint. I didn’t waste my time, but I used it to pick up more architectural stuff on the side, while always learning on the job.

I’ll admit that when I started this route, I already had a bunch of experience under my belt, and this may not be feasible if you have managers breathing down your neck all the time. But the point is, if you play it smart you can use company time to improve yourself and they may even appreciate you for it.

I work in a startup, so I’d say that almost every day, I learn something new. So I don’t really need to look in-between tasks because a lot of tasks bring new challenges.

When I worked in corpos, my job was restricted to the same tasks and specific knowledge. Now it’s the opposite where I need to learn what I need to create a feature or fix an issue.

I guess that lately, a lot of new things have popped up and I need to absorb a lot of information to implement the features I need. And that is probably what is triggering the imposter syndrome.

Thanks for the insight, it is appreciated.

There’s a lot to talk about from this point alone, but I’ll be brief: having gone through university courses on processor design and cut my teeth on fighting people for a single bit in memory, I’m probably a lot more comfortable with those minutiae than most; having written my first few lines of C in 10 years just an hour ago, to demo a basic memory safety bug, I can say you’re way, way ahead of me.

There are different ways to learn and gain experience and each path will train us in different skills. Then we build teams around that diversity.

Thanks for the insight. I guess one thing that causes my imposter syndrome is that I want to know how everything works in detail.

I agree that for other people, what I know seems like magic to them. It’s easy to look at what we don’t know, but we don’t take the time to appreciate how far we’ve come. We should do that more often.

> how do you rate yourself in your most used language?

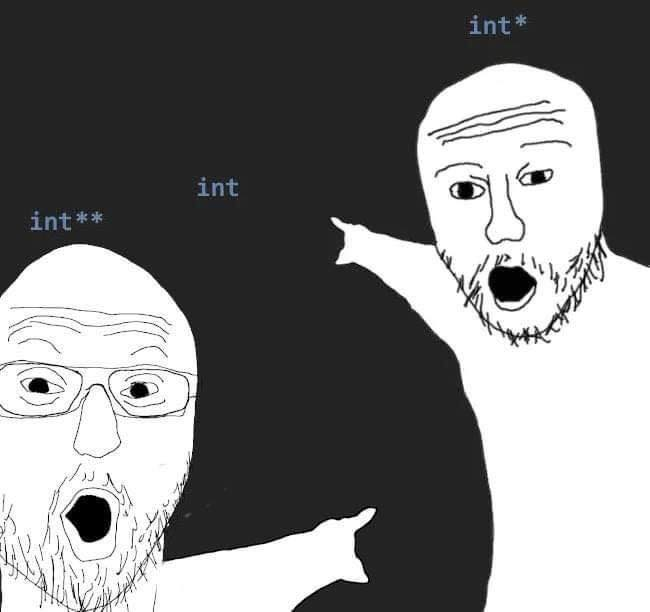

I know things that no human should have to carry the knowledge of

> Do you understand the subtleties and nuances of your language?

My soul is scarred by the nuanced minutiae of many an RFC.

> in the hope of reducing my imposter syndrome.

There’s but two types in software - those who have lived to see too much…and those who haven’t…yet.

After almost 12~15 years of programming in C and C++, I would give myself a solid “still don’t know enough” out of 10.

> After almost 12~15 years of programming in C and C++, I would give myself a solid “still don’t know enough” out of 10.

That resonates so thoroughly.

And while it can 100% also be the case in any tool or language, it’s somehow 300% true for C and C++.

In C in particular, you have to be very cognizant of the tricky ways the language can screw you with UB. You might want to try some verification tools like Frama-C, use UB sanitizers, enable all the compiler warnings and traps that you can, etc. Other than that, I think using too many obscure features of a language is an antipattern. Just stick with the idioms that you see in other code. Take reviewer comments on board, and write lots of code so you come to feel fluent.
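To make the UB point concrete, here is a minimal sketch of one classic footgun, signed integer overflow, and a way to check for it before it happens. The helper name `checked_add_int` is hypothetical and only for illustration; it is not taken from any library mentioned in this thread.

```c
#include <assert.h>
#include <limits.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical helper: adds a and b without ever evaluating an
 * overflowing signed addition (which would be undefined behaviour).
 * Returns false and leaves *out untouched if the sum does not fit. */
bool checked_add_int(int a, int b, int *out)
{
    assert(out != NULL);

    /* Compare against the limits first; the subtractions below
     * cannot overflow because of the sign checks on b. */
    if ((b > 0 && a > INT_MAX - b) ||
        (b < 0 && a < INT_MIN - b)) {
        return false;
    }

    *out = a + b;   /* safe: the range was checked above */
    return true;
}
```

When testing on a host machine with GCC or Clang, building with `-Wall -Wextra -fsanitize=undefined` is one way to get warnings at compile time and a runtime report if an overflow like this slips through; whether the sanitizer runtime is usable on a given MCU target is something to check for your toolchain.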

Added: the MISRA C guidelines for embedded C tell you to stay with a relatively safe subset of the language. They are mostly wise, so you might want to use them.

Added: is your issue with C or with machine code? If you’re programming small MCUs, then yes, you should develop some familiarity with machine code and hardware level programming. That may also help you get more comfortable with C.

Yeah, but they make me MISRAble.

My issue is with the imposter syndrome, I’d say.

I don’t have asm at my fingertips, because today’s MCUs are so full of features that it isn’t useful most of the time, but if I need to whip up something in asm for whatever reason, I know the basics and how to search for documentation to help me.

I try to follow the MISRA C guidelines because they’re pretty easy to follow and they give you tools to reduce mistakes.

I have enough experience to avoid many common pitfalls such as overflows, but for whatever reason, it always feels like I don’t know enough when I come across a tutorial or a post with a deep dive into a specific part of an embedded project or of the C language.

When I read these tutorials/posts, I understand what is being done, but I could not come to these conclusions myself, if that makes sense.

What are you working on and what kind of organization? Are you working with someone more senior? You could ask him or her for an assessment of where you should work on strengthening up.

You are in the right mindset if you are worried. Many C programmers greatly overestimate their ability to write bug-free or even valid (UB-free) code.

The AVR MCUs are pretty simple compared with 32 bit MCUs, so are good for asm coding.

Otherwise it’s a matter of coding til it’s reflexive.

Philip Koopman has written a book on MCU programming that sounds good. I haven’t seen it yet but someday. You might look for it: https://betterembsw.blogspot.com/2021/02/better-embedded-system-software-e-book.html?m=1

John Regehr’s blog is also good.

Thanks for your input.

I think I would like to follow all these people and their work on C, and their in depth knowledge. But free time is sparse, and I don’t have the mental energy when I do have some time.

As for my work, I work in a startup where I am the only one doing what I do. However, I have a lot of leeway in how I code, so I always stay somewhat read up on best practices. So I can’t really refer to a senior dev, but I can self-teach.

I think I’ve coded enough that a lot of what I do is a reflex, and I can often approximate a first solution, but I have doubts all the time about how I implement new features. That makes me a slower coder, and I really struggle to do fast prototyping.

I am aware enough of what I do well and what I struggle with, so there’s that.

Fair enough. If your product isn’t safety or security critical then it’s mostly a matter of getting it working and passing reasonable testing. If it’s critical you might look for outside help or review, and maybe revisit the decision to use C.

The book “Analysable Real-Time Systems: Programmed in Ada” was recommended to me and looks good. I have a copy that has been on my reading pile for ages. I was just thinking about it recently. It could be a source of wisdom about embedded dev in general, plus Ada generally fosters a more serious approach than C does, so it could be worth a look. I also plan to get Koopman’s book that I mentioned earlier.

A one out of ten. I consider myself the world’s second worst programmer.

By any chance, do you use a niche language that has only two programmers?

Nope. I’m just that bad. I feel like I have a logical mind, but it just seems like the commands don’t do what I think they will, won’t operate on a certain type of variable, or holy crap I forgot a friggin space or semicolon or something.

Languages in order of proficiency: C++, HTML/CSS, Matlab, Basic, Fortran (1 class taken)

But when I say proficient I seriously mean looking stuff up on the internet for every single line. And I haven’t used Basic in decades.

Who is the first?

The guy who was using my name to make code submissions 2-3 years prior.

Odds are the worst one is still using Twitter.

You mean the worst? I don’t know. I’m just hopeful that I’m not actually the worst. Fingers crossed.

Imposter syndrome is strong

Better than many, mediocre.

With my coworkers I’ve got a strange ability to pick up any language that tastes like c, and get stuff done. I’m sure I’ve confused our c# guys when I make a change to their code and ask for a code review, because I’ll chase down quality of life improvements for myself. (Generally, I will make the change and ask if I have any unintended side effects, because in an MCU, I know what all my side effects are, multi threaded application?, not at all)

Edit: coming from a firmware view, I’ve made enough mistakes to realize when order of operations will stab me, when a branch is bad because that pipeline hit will hurt, and I still get & vs && wrong more often than I would like to admit.

I just have to say “tastes like c” is a visceral way to say it. I approve.

I think I’ll never stop mixing up & and &&, | and ||, or = and ==. It’s so easy to go over them fast and not notice the mistake.
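For anyone who hasn’t been bitten by this yet, here is a small sketch of why the & vs && slip is so easy to miss: both versions compile, and both return the right answer for some inputs. The flag names and values are hypothetical, just for illustration.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define FLAG_READY 0x01u   /* hypothetical status bits */
#define FLAG_ERROR 0x02u

static_assert((FLAG_READY & FLAG_ERROR) == 0u, "flag bits must not overlap");

/* Intended check: bitwise AND isolates the READY bit. */
bool is_ready(uint8_t status)
{
    return (status & FLAG_READY) != 0u;
}

/* The typo: logical AND only asks "are both operands nonzero?",
 * so any nonzero status is reported as ready. */
bool is_ready_buggy(uint8_t status)
{
    return status && FLAG_READY;
}
```

With a status of `FLAG_ERROR` only, `is_ready` returns false while `is_ready_buggy` returns true. Some compilers can warn about this kind of thing (and about `=` used where `==` was probably meant) when warnings are turned up, which is one more reason to enable them.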

I like developing MCU firmware because there is a limited amount of resources and you usually have direct control over what is running when.

I feel the “better than many, mediocre” in my soul. I mean, I get paid to code, so I certainly have good enough knowledge to do so. But I have a tendency to undersell myself.

I try to tell this to all young guns getting in.

Given the breadth and depth of theory, practice, and abstraction, the amount of information I would need before I’m comfortable enough to consider myself an expert would take a lifetime to learn.

Hence, it’s, “Stay in the dojo, young padawan!”

If you step in enough shit you eventually learn to realise when you are about to step in it again. I think the most knowledgeable people are those that have failed the most and found something helpful along the way. It seems you are well on your journey, so just keep stepping. At some point the abstractions you have control over become unreliable until you understand how they interact with lower level systems, and the balance of control comes back because you now know the circumstances in which these abstractions work in your favour.

> I should know more about what’s happening under the hood.

You’ve just identified the most important skill of any software developer, IMO.

The three most valuable topics I learned in college were OS design basics, assembly language, and algorithms. They’re universal, and once you have a grasp on those, a lot of programming language specifics become fairly transparent.

An area where those don’t help is paradigm specifics: there’s theory behind functional programming and OO programming which, if you don’t understand it, won’t impede you from writing in that language, but will almost certainly result in really bad code. And, depending on your focus, it can be necessary to have domain knowledge: financial, networking, graphics.

But for what you’re talking about, those three topics cover most of what you need to intuit how languages do what they do, and especially C, because it’s only slightly higher level than assembly.

Assembly informs CPU architecture and operations. If you understand that, you mostly understand how CPUs work, as much as you need to to be a programmer.

OS design informs how various hardware components interact, again, enough to understand what higher level languages are doing.

Algorithms… well, you can derive algorithms from assembly, but a lot of smart people have already done a ton of work in the field, and it’s silly to try to redo that work. And, unless you’re very special, you probably won’t do as good a job as they’ve done.

Once you have those, all languages are just syntactic sugar. Sure, the JVM has peculiarities in how its garbage collection works; you tend to learn that sort of stuff from experience. But a hash table is a hash table in any language, and they all have to deal with the same fundamental issues of hash tables: hashing, conflict resolution, and space allocation. There are no short cuts.

Any good resources you can share for/on each topic?

College.

I’m one of those folks who believes not everyone needs a degree, and we need to do more to normalize and encourage people who have no interest in STEM fields to go to trade schools. However, I do firmly believe computer programming is a STEM field and is best served by getting a degree.

There are certainly computer programming savants, but most people are not, and the next best thing is a good, solid higher education.

Thanks for the input, it will make me think about how to approach how to get the skills I need.

I’d say I am decent with FreeRTOS which is pretty much just a scheduler with a few bells and whistles.

I haven’t used assembly in a long while, so I know where to look to understand all the instructions, but I can’t tell right off the bat what a chunk of assembly code does.

Algorithms, I am terrible at these because I rarely use them. I haven’t worked on a big enough project where an algorithm is needed. I tend to work with finite state machines, which is close to algorithms, but it’s not quite it. And a big part of my job is interfacing peripheral chips for others to use.

Thanks for the input

You’re welcome!

> I haven’t used assembly in a long while, so I know where to look to understand all the instructions, but I can’t tell right off the bat what a chunk of assembly code does.

Oh, me neither. And that’s not what I think is necessary; what’s important is that you can generally imagine the sorts of operations which are going on under the hood for any given line of code. That there’s no magic “generate a hash for a string” CPU operation, and that, ultimately, something is going to be iterating over a series of memory locations and performing several math operations on each to produce a numeric output. I think this awareness is enormously valuable in developers, and helps them think about the code they’re writing in a certain way, and usually in a way that improves their code.
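As a rough illustration of that “no magic hash instruction” point, here is what a string hash boils down to in C. This sketch uses the well-known 32-bit FNV-1a scheme purely as an example; it is not the hash any particular language runtime in this thread is known to use.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* 32-bit FNV-1a: walk the bytes of a NUL-terminated string and
 * fold each one into a running value with an XOR and a multiply. */
uint32_t fnv1a_32(const char *s)
{
    assert(s != NULL);

    uint32_t hash = 2166136261u;      /* FNV offset basis */
    for (; *s != '\0'; ++s) {
        hash ^= (uint8_t)*s;          /* mix in one byte */
        hash *= 16777619u;            /* FNV prime; wraps modulo 2^32 */
    }
    return hash;
}
```

The loop touches every byte once, so the cost grows linearly with the length of the string, which is exactly the sort of thing worth having in the back of your mind when a one-line “hash this” call sits inside a hot path.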

> Algorithms, I am terrible at these because I rarely use them.

You use them all the time! Anything longer than a single operation is an algorithm.

Nobody is going to ask you to write a search function; however, being aware of Big-O notation, and being able to reason about time and space complexity, is important. On the back end, it’s critical. It’s important if you’re a front end developer - I blame the whole NodeJS library fiasco on not enough awareness of dependency complexity by a majority of JS developers.

> I tend to work with finite state machines, which is close to algorithms, but it’s not quite it.

I’d absolutely call FSM work “algorithms”, and it sounds as if the projects you’re working on are where these fundamentals are most important. Interfaces between hardware components? It’s the most fraught topic in CIS! So. Many. Pitfalls. Shit, you probably have to worry about clock speeds and communication sheer; there’s absolutely a huge corpus of material about algorithms for handling stuff you’re working with, like vector clocks. That’s a fabulous, interesting field. It’s also super tedious, and requires huge attention to detail which I lack, so in a way I envy you, but am also glad I’m not you.
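To show why FSM work counts as algorithms, here is a minimal table-driven state machine sketch in C. The states, events, and transitions are hypothetical and invented for illustration; they are not taken from anyone’s actual firmware.

```c
#include <assert.h>

/* Hypothetical states and events for a small device controller. */
typedef enum { STATE_IDLE, STATE_RUNNING, STATE_FAULT, STATE_COUNT } state_t;
typedef enum { EVT_START, EVT_STOP, EVT_ERROR, EVT_RESET, EVT_COUNT } event_t;

/* Transition table: next state for every (state, event) pair. */
static const state_t transitions[STATE_COUNT][EVT_COUNT] = {
    /*                  START          STOP         ERROR        RESET        */
    [STATE_IDLE]    = { STATE_RUNNING, STATE_IDLE,  STATE_FAULT, STATE_IDLE    },
    [STATE_RUNNING] = { STATE_RUNNING, STATE_IDLE,  STATE_FAULT, STATE_RUNNING },
    [STATE_FAULT]   = { STATE_FAULT,   STATE_FAULT, STATE_FAULT, STATE_IDLE    },
};

/* One step of the machine: a single bounds check and a table lookup. */
state_t fsm_step(state_t s, event_t e)
{
    assert((unsigned)s < STATE_COUNT && (unsigned)e < EVT_COUNT);
    return transitions[s][e];
}
```

Each step is a constant-time lookup, and the table makes every (state, event) combination explicit, so a forgotten transition shows up as a visible cell rather than a missing `case`. A `switch`-based version is equally valid; the table is just one design choice.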

After 6 years of seriously using Python regularly, I’d probably give myself a 6/10. I feel comfortable with best practices and making informed design decisions. I have no problem using linting and testing tools. And I’ve contributed to large open source projects. I could improve a lot by learning more about the standard library and some core computer science concepts that inform the design of the language. I’m pretty weak in web frameworks too, unfortunately.

After 3-4 years of using Python I’m bumping you up to a 7 so I can fit in at a 5. Congrats on your upgrade. I’ve never contributed to open source but I’ve fixed issues in publicly archived tools so that they aren’t buggy for my team. I can see errors and know what likely caused them and my code literacy is decent. That being said, I think I’m far from advanced.

8/10 Server-side JavaScript

7/10 Ampscript

3/10 SQL

There is something about SQL that I can’t get to click with me. I can run basic queries and aggregation, but I can never get nested queries to work right.

All of these also assume I have access to documentation. Without documentation, all of them are like a 2. 🤷

I have advice that you didn’t ask for at all!

SQL’s declarative ordering annoys me too. In most languages you order things based on when you want them to happen. SQL doesn’t work like that: you need to order query syntax based on where that bit goes according to the rules of SQL. It’s meant to aid readability, and some people like it a lot, but for me it’s just a bunch of extra rules to remember.

Anyway, for nested expressions, I think CTEs make stuff a lot easier, and SQL query optimisers mean you probably shouldn’t have to worry about performance.

I.e. instead of:

    SELECT one.col_a, two.col_b
    FROM one
    LEFT JOIN (SELECT * FROM somewhere WHERE something) as two
      ON one.x = two.x

you can do this:

    WITH two as (
      SELECT * FROM somewhere WHERE something
    )
    SELECT one.col_a, two.col_b
    FROM one
    LEFT JOIN two
      ON one.x = two.x

Especially when things are a little gnarly with lots of nested CTEs, this style makes stuff a tonne easier to reason about.

I’m 100% going to try this, but I have a feeling that it isn’t going to work in my application. Salesforce Marketing Cloud uses some pared-down old version of Transact-SQL and about half of the functions you’d expect to work just flat out don’t.

The joys of using a Salesforce product.

Oh boy, have fun! CTEs have pretty wide support, so you might be in luck (well, at least in that respect; in all other cases you’re still using Salesforce and my commiserations are with you).

Salesforce just gives me the other kind of CTE.

I loathe debugging ampscript and anything to do with marketing cloud with a passion…

Wrap the Ampscript in an ssjs try/catch block and debug all your shit on a cloudpage. ;)

Everyone that works in SFMC for an extended period of time hates SFMC. Or at least has a love hate relationship with it. I think Salesforce is the most worthless company in existence and John Mulaney’s anti-SF rant at Dreamforce brought a little light to my life.

I very rarely actually use Ampscript anymore. Almost everything is done in ssjs in my instance. Thank fuck I’m not consulting anymore and don’t have to deal with other company’s stuff.

I’m probably at about a 1/10 in Ampscript. I just don’t use it enough. I tried something like what you are describing but it didn’t work very well. Trying to debug Ampscript that runs in an email template at send time by copying it into a cloud page and then trying to mimic the various properties only available at send time was just maddening. I can’t comprehend how Salesforce bought such a buggy and poorly thought through piece of junk. It’s a coin toss whether some of the main menus even load half the time. Ergh…

Yeah, you still have to draw in all those values through lookups or just set the variables manually but if you keep getting a failed send or that shitty 500 error on a cloudpage, the try/catch block prevents it and will actually display the error. Should look something like this:

    <script runat="server">
    Platform.Load("Core","1.1.1");
    try{
    </script>

    %%[ your AMPscript block goes here ]%%

    <script runat="server">
    }catch(e){
      Write(Stringify(e));
    }
    </script>

SFMC is Salesforce’s red headed stepchild. The product has been neglected into the ground and they keep shoehorning random shit into it then neglecting that, too. Ad Studio, Social Studio, and Interaction Studio were all different things they bought and slapped a coat of SF branded paint on then let die. It is such a weird product but EVERYONE has it and it gives me pretty good job security knowing how to make it function about half the time.

The more I learn about my language the less I think it matters. Maybe in embedded C you can’t just leave everything to the compiler though.

It’s a statically typed language with a minimal set of guard rails, so there are certainly some considerations to take into account, but the compilers are pretty good and give more leeway to the dev.

Not everything, but still most things by far.

I got pretty good with BASIC back in 1983.

Nice!

I’m still struggling to get good at BASIC, myself.

BASIC was my first language, and I still don’t feel like I’ve mastered it, so I still study it on some weekends.

I take so many modern tools for granted, now. It makes my learning progress in BASIC feel slow.

But I’m getting better at it.